Programming Languages: Why Java Is Quietly Becoming an AI Platform

What I Learned at JavaFest'25 About Java's AI Future

I walked into JavaFest’25 expecting the usual: framework announcements, performance benchmarks, maybe some Spring Boot 4.0 teasers. I was wrong. Within the first session, it became clear that Java wasn’t just adopting AI—it was reimagining itself as an AI-native platform.

TL;DR: Six emerging technologies (Spring AI, MCP, RAG, Micronaut, GraalVM, SLMs) are turning Java into an AI-first platform and reshaping how enterprise systems will reason, decide, and learn.

Across the sessions, the message was unmistakable:

Java is not just adapting to AI — it’s architecting it.

From AI-powered development workflows to embedding language models in Java applications, the event showcased how Java is reinventing itself as the platform for intelligent systems — not just enterprise applications.

In this piece, I’ll walk you through the six sessions I attended at JavaFest’25, which together traced Java’s evolution from backend workhorse to AI-native ecosystem.

Whether you’re a backend engineer, architect, or CTO — if Java powers your stack, this matters. The Java ecosystem just articulated how it will dominate AI infrastructure for the next decade.

1. From Prompts to Agents: Supercharging Spring Boot with AI and MCP

The session redefined how we think about integrating AI into enterprise Java.

Imagine delegating API calls, database queries, and business logic decisions to an AI agent — while you maintain complete control. That’s what MCP + Spring Boot enables.

The key enabler here is MCP (Model Context Protocol) — a standardized way for AI systems to communicate with tools, databases, and APIs.

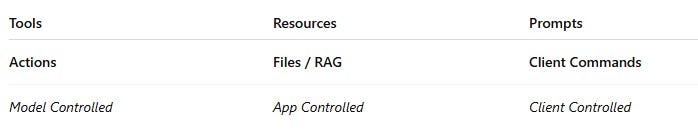

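The speakers introduced MCP’s three-tier control model, which ranges from model autonomy to user-driven commands: tools are invoked at the model’s discretion, resources are supplied by the application, and prompts are triggered explicitly by the user. MCP orchestrates that hierarchy.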

A fascinating concept was Predefined Prompts in MCP — reusable, declarative prompt templates embedded within application logic, allowing consistent agent behavior across sessions.
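To make the idea of a reusable, declarative prompt concrete, here is a minimal sketch in plain Java. It is my own illustration, not the Spring AI or MCP SDK API: the `PromptTemplate` record, the `{placeholder}` syntax, and the ticket example are all assumptions.

```java
import java.util.Map;

// Hypothetical illustration only: PromptTemplate is not an MCP or Spring AI type.
record PromptTemplate(String name, String template) {

    // Replaces each {key} placeholder with its value; unknown placeholders are left untouched.
    String render(Map<String, String> args) {
        String out = template;
        for (Map.Entry<String, String> e : args.entrySet()) {
            out = out.replace("{" + e.getKey() + "}", e.getValue());
        }
        return out;
    }
}

final class PromptTemplateDemo {
    public static void main(String[] args) {
        // Declared once, reused across sessions so the agent is always asked the same way.
        PromptTemplate summarize = new PromptTemplate(
            "summarize-ticket",
            "Summarize support ticket {ticketId} for a {audience} reader.");
        System.out.println(summarize.render(
            Map.of("ticketId", "T-1001", "audience", "non-technical")));
    }
}
```

The point of the sketch is the shape: the wording lives in one named, versionable place, and callers only supply arguments.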

My Takeaway:

Spring Boot + MCP enables context-rich, prompt-defined, and tool-aware AI microservices. It’s a natural evolution toward programmable reasoning systems — a concept central to Agentic AI architectures.

2. MCP Internals, Security, and Cloud Deployments

If the previous session introduced the concept, this one went deep into the plumbing of MCP.

From secure context handling to cloud deployment models, the session emphasized how MCP provides controlled autonomy through defined tool boundaries. An AI agent can decide on its own which tools to call, but only from a pre-approved set that you’ve explicitly exposed, so its decisions stay within safe guardrails.
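The guardrail idea can be sketched without any framework. The following is a simplified illustration under my own assumptions: `ToolRegistry`, the tool names, and the string-in/string-out handler signature are hypothetical and do not come from an MCP SDK.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

// Hypothetical sketch: ToolRegistry is an illustrative name, not part of any MCP SDK.
final class ToolRegistry {
    private final Map<String, Function<String, String>> tools = new LinkedHashMap<>();

    // Only tools registered here are ever visible to, or callable by, the agent.
    void expose(String name, Function<String, String> handler) {
        tools.put(name, handler);
    }

    Set<String> listTools() {
        return tools.keySet();
    }

    // The agent chooses the tool name; the registry enforces the boundary.
    String call(String name, String input) {
        Function<String, String> handler = tools.get(name);
        if (handler == null) {
            throw new IllegalArgumentException("Tool not exposed: " + name);
        }
        return handler.apply(input);
    }

    public static void main(String[] args) {
        ToolRegistry registry = new ToolRegistry();
        registry.expose("order_status", id -> "Order " + id + " is SHIPPED");
        System.out.println(registry.call("order_status", "42"));
        try {
            registry.call("delete_customer", "42"); // never exposed, so it is rejected
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

The model stays free to pick among exposed tools, while anything outside the registry fails before it reaches business logic.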

MCP is built on JSON-RPC, a lightweight protocol that treats AI tool interactions as simple function calls.

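It revolves around three core calls (method names as they appear in the MCP specification):

initialize: handshake in which client and server identify their capabilities

tools/list: discover the tools the server exposes (the “get tools” step)

tools/call: invoke a tool by name with arguments (the “invoke tools” step)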
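For readers who have not seen JSON-RPC on the wire, here is a rough sketch of what the three requests might look like. In the published MCP specification the methods are named `initialize`, `tools/list`, and `tools/call`; the ids, client name, protocol version string, tool name, and arguments below are made-up example values, and `McpMessages` is a hypothetical helper rather than SDK code.

```java
// Illustrative sketch of JSON-RPC 2.0 request bodies for the three core MCP calls.
final class McpMessages {

    // Handshake: the client announces its protocol version and capabilities.
    static String initialize(int id) {
        return """
            {
              "jsonrpc": "2.0",
              "id": %d,
              "method": "initialize",
              "params": {
                "protocolVersion": "2025-03-26",
                "capabilities": {},
                "clientInfo": {"name": "demo-client", "version": "0.1.0"}
              }
            }""".formatted(id);
    }

    // Discovery: ask the server which tools it exposes.
    static String listTools(int id) {
        return """
            {"jsonrpc":"2.0","id":%d,"method":"tools/list"}""".formatted(id);
    }

    // Invocation: call one tool by name; argumentsJson must already be a valid JSON object.
    static String callTool(int id, String toolName, String argumentsJson) {
        return """
            {"jsonrpc":"2.0","id":%d,"method":"tools/call","params":{"name":"%s","arguments":%s}}"""
            .formatted(id, toolName, argumentsJson);
    }

    public static void main(String[] args) {
        System.out.println(initialize(1));
        System.out.println(listTools(2));
        System.out.println(callTool(3, "order_status", "{\"orderId\":\"42\"}"));
    }
}
```

A real client would use a JSON library and an MCP SDK instead of string templates; the sketch only shows that each interaction is an ordinary request with a method name and parameters.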

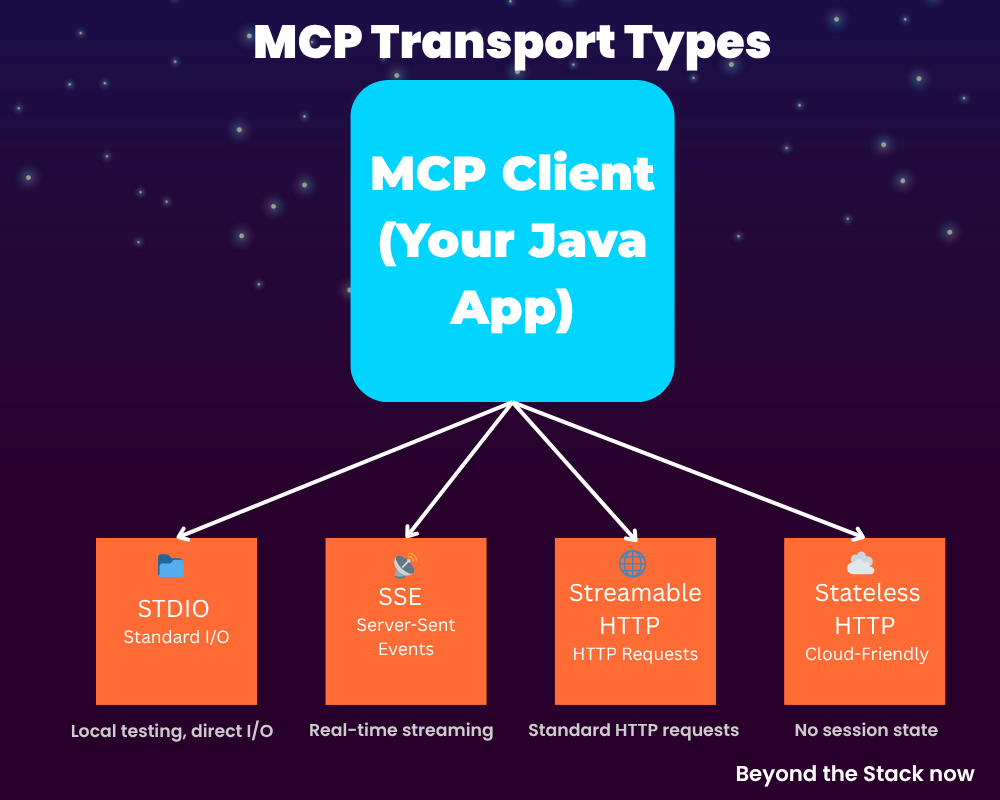

Depending on deployment needs, there are four MCP transport types. Think of them as different ways for your Java application and AI agent to ‘talk’:

STDIO: direct communication over standard input/output (simplest, local testing)

Server-Sent Events (SSE): real-time streaming from server to agent

Streamable HTTP: standard HTTP requests (most familiar to Java devs)

Stateless Streamable HTTP: HTTP without maintaining session state (cloud-friendly)

For security, the server authenticates callers with JWT-based tokens, which aligns directly with existing Spring Security infrastructure.
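To show what checking a JWT involves at the lowest level, here is a small sketch that signs and verifies an HS256 token using only the JDK. It is an assumption-laden illustration, not what the session demonstrated: `Hs256Tokens` and the secret are hypothetical, and the code checks the signature only (no expiry, audience, or algorithm-header validation). In a real service you would rely on a vetted library and your existing Spring Security setup.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.util.Base64;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

// Hypothetical helper: signature handling only, for illustration.
final class Hs256Tokens {
    private static final Base64.Encoder ENC = Base64.getUrlEncoder().withoutPadding();

    private static byte[] hmac(String data, byte[] secret) {
        try {
            Mac mac = Mac.getInstance("HmacSHA256");
            mac.init(new SecretKeySpec(secret, "HmacSHA256"));
            return mac.doFinal(data.getBytes(StandardCharsets.UTF_8));
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // Builds header.payload.signature, each part base64url-encoded without padding.
    static String sign(String payloadJson, byte[] secret) {
        String header = ENC.encodeToString(
            "{\"alg\":\"HS256\",\"typ\":\"JWT\"}".getBytes(StandardCharsets.UTF_8));
        String payload = ENC.encodeToString(payloadJson.getBytes(StandardCharsets.UTF_8));
        String signingInput = header + "." + payload;
        return signingInput + "." + ENC.encodeToString(hmac(signingInput, secret));
    }

    // Recomputes the HMAC over header.payload and compares it in constant time.
    static boolean verify(String token, byte[] secret) {
        String[] parts = token.split("\\.");
        if (parts.length != 3) {
            return false;
        }
        byte[] actual;
        try {
            actual = Base64.getUrlDecoder().decode(parts[2]);
        } catch (IllegalArgumentException e) {
            return false; // signature part is not valid base64url
        }
        return MessageDigest.isEqual(hmac(parts[0] + "." + parts[1], secret), actual);
    }

    public static void main(String[] args) {
        byte[] secret = "demo-secret-change-me".getBytes(StandardCharsets.UTF_8);
        String token = sign("{\"sub\":\"mcp-client\"}", secret);
        System.out.println(verify(token, secret));       // untouched token
        System.out.println(verify(token + "x", secret)); // tampered signature
    }
}
```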

What impressed me most — the demo showed VS Code with GitHub Copilot (Agent Mode) acting as an MCP client, directly testing a custom MCP server. This is AI collaborating with AI: the developer’s assistant informing the production system’s behavior.

My Takeaway:

MCP feels like “REST for AI agents”: a protocol that will make distributed, interoperable intelligence possible.

3. RAG (Retrieval-Augmented Generation) in Java

With MCP providing the control layer for AI agents, the next challenge becomes: how do you feed those agents the right information without exposing your organization’s sensitive data?

Picture this: Your customer support AI needs to answer questions about your proprietary SaaS product. You can’t afford to fine-tune an LLM (expensive), and you won’t send customer data to OpenAI (liability risk). RAG solves both problems.

RAG is a practical pattern for grounding AI reasoning in private, enterprise context. It combines local context retrieval with LLM reasoning, avoiding costly and privacy-risky fine-tuning. Because answers are grounded in retrieved documents, it also reduces hallucination, though it does not eliminate it.

This session showcased practical RAG implementations using Java (LangChain4j) and a vector database, bridging:

Vector embeddings

Knowledge stores

Java-based retrieval layers for grounded responses
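The three pieces above can be sketched in a few lines of plain Java. This is a toy version under stated assumptions, not the LangChain4j API: `TinyRetriever` and `Doc` are hypothetical names, the two-dimensional vectors are hand-written stand-ins for real embeddings, and the "knowledge store" is just an in-memory list.

```java
import java.util.Comparator;
import java.util.List;

// Toy retrieval layer: cosine similarity over an in-memory store, then prompt assembly.
final class TinyRetriever {
    record Doc(String text, double[] embedding) {}

    // Cosine similarity between two vectors of equal length.
    static double cosine(double[] a, double[] b) {
        double dot = 0, na = 0, nb = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            na += a[i] * a[i];
            nb += b[i] * b[i];
        }
        return dot / (Math.sqrt(na) * Math.sqrt(nb));
    }

    // Returns the k documents whose embeddings are most similar to the query embedding.
    static List<Doc> topK(List<Doc> store, double[] query, int k) {
        return store.stream()
            .sorted(Comparator.comparingDouble((Doc d) -> cosine(d.embedding(), query)).reversed())
            .limit(k)
            .toList();
    }

    // Grounds the question in the retrieved text before it is sent to a model.
    static String buildPrompt(String question, List<Doc> context) {
        StringBuilder sb = new StringBuilder("Answer using only this context:\n");
        for (Doc d : context) {
            sb.append("- ").append(d.text()).append('\n');
        }
        return sb.append("Question: ").append(question).toString();
    }

    public static void main(String[] args) {
        List<Doc> store = List.of(
            new Doc("Refunds are issued within 14 days.", new double[] {1.0, 0.0}),
            new Doc("Support is open 9am to 5pm.", new double[] {0.0, 1.0}),
            new Doc("Returns need the original receipt.", new double[] {0.9, 0.1}));
        double[] query = {1.0, 0.0}; // pretend embedding of the question
        System.out.println(buildPrompt("How do refunds work?", topK(store, query, 2)));
    }
}
```

In a real system an embedding model produces the vectors and a vector database performs the search, but the flow is the same: embed, retrieve the nearest documents, and pass them to the model as context.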

The speakers compared:

Fine-tuning → Expensive, static, retraining-heavy

RAG → Dynamic, low-cost, data stays private

My Takeaway:

For teams hesitant about AI adoption, RAG offers a privacy-first entry point. Your documents stay in your own knowledge store, only the retrieved snippets reach the model, and with a locally hosted model nothing sensitive has to leave your infrastructure.

With tools like LangChain4j, Java developers can implement context-aware AI systems while keeping data sovereignty intact.

4. The Need for Speed: Java Microservices Powered by Micronaut & GraalVM

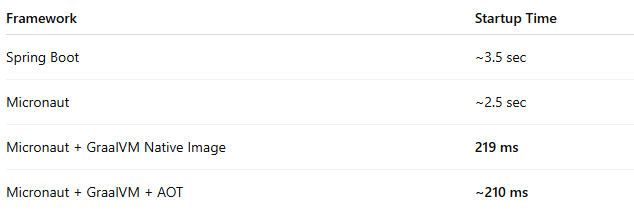

RAG solves the data problem, but there’s another silent killer in production: latency. As Java teams scale AI workloads, cold starts and memory overhead become real bottlenecks. This session showed how Micronaut, together with GraalVM, addresses both, enabling AI microservices that respond with the speed enterprise systems demand.

The session broke down why Spring Boot suffers from cold-start delays: mainly reflection-heavy dependency injection and runtime classpath scanning.

Micronaut’s approach:

Precomputed DI (Dependency Injection)

AOT Compilation (Ahead of Time)

No runtime reflection overhead
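The difference between the two wiring styles can be shown in miniature. This is a conceptual sketch under my own assumptions, not Micronaut’s actual generated code: `DiStyles`, `Greeter`, and `GENERATED_GREETER_FACTORY` are hypothetical names, and the hand-written factory stands in for what an annotation processor would emit at build time.

```java
import java.util.function.Supplier;

// Contrast: reflective lookup at runtime vs. a factory that is fixed at compile time.
final class DiStyles {
    interface Greeter {
        String greet(String name);
    }

    static final class EnglishGreeter implements Greeter {
        public EnglishGreeter() {}

        @Override
        public String greet(String name) {
            return "Hello, " + name;
        }
    }

    // Runtime style: resolve the class by name and instantiate it reflectively.
    // The work (lookup, access checks) happens on every startup.
    static Greeter viaReflection(String className) {
        try {
            return (Greeter) Class.forName(className).getDeclaredConstructor().newInstance();
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    // Build-time style: a plain constructor reference, standing in for generated wiring.
    // Nothing is discovered at startup, and native-image tooling can see the call statically.
    static final Supplier<Greeter> GENERATED_GREETER_FACTORY = EnglishGreeter::new;

    public static void main(String[] args) {
        Greeter reflective = viaReflection(EnglishGreeter.class.getName());
        Greeter precomputed = GENERATED_GREETER_FACTORY.get();
        System.out.println(reflective.greet("JavaFest"));
        System.out.println(precomputed.greet("JavaFest"));
    }
}
```

Both paths produce the same object; the precomputed one simply moves the discovery cost from every startup to a single build.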

The numbers were striking: Micronaut applications start in milliseconds where comparable Spring Boot applications take seconds, and GraalVM native images cut memory consumption. At scale, faster starts and smaller footprints translate directly into lower infrastructure costs.

Micronaut also integrates natively with Prometheus and Grafana, making observability first-class.

My Takeaway:

If Spring Boot is the “brains,” Micronaut + GraalVM is the “brain + muscle” — enabling Java systems that can scale with the agility of modern latency-sensitive AI workloads.

5. Thriving in the Age of AI-Powered Software Development

With infrastructure optimized for speed, the focus shifted from how we build to what we’re actually building. The conversation moved beyond frameworks and into philosophy — how does the role of the developer change when AI becomes your co-creator?

The session paired that philosophy with practice. Its core claim: AI isn’t replacing programmers; it’s transforming how we think and what we focus on.

The concept of Spec-Driven Development (SDD) was particularly striking — a pipeline of AI-assisted product creation driven by structured specifications.

The SDD Flow:

Generate high-level requirements

Derive APIs and expected user behaviors

Expand each API into detailed specs

Auto-generate tests and code

Tools in the ecosystem: Spec-Kit, Kiro, Tessl, AGENTS.md

For building AI-powered applications, the speaker highlighted Spring AI, LangChain4j, Embabel, Koog, and Google ADK.

My Takeaway:

The new developer superpower isn’t prompt engineering—it’s information architecture. As AI becomes your co-creator, your job shifts from writing every line of code to structuring specifications so AI can compose high-quality implementations from intent.

6. Embedding Small Language Models (SLMs) in Java for Mobile & Edge Devices

While most discussions centered on cloud-scale systems and large language models (LLMs), the final session revealed that intelligent systems aren’t just living in data centers anymore.

The implications are profound: your mobile app, IoT device, or local microservice can make intelligent decisions independently, without cloud latency, vendor lock-in, or privacy compromise.

Key technologies discussed:

SLMs bring:

Low latency (local inference)

Improved privacy (no cloud dependency)

Energy efficiency

Applications discussed:

Offline assistants

Edge decision systems for IoT

Local AI in microservices for privacy and latency control

My Takeaway:

This is the next frontier — distributed, self-contained agents running closer to the user, combining the power of AI reasoning with Java’s portability.

It completes the circle: From cloud-hosted intelligence to edge-embedded autonomy.

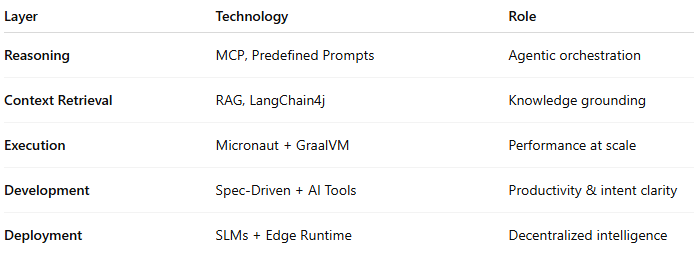

The Bigger Picture

Java’s evolution is now unmistakable — it’s transforming from a backend runtime into a distributed intelligence platform.

Java is no longer just the language of business logic — it’s becoming the language of intelligence.

💬 What’s your take?

Do you see Java emerging as the AI platform in your organization? Or is your stack moving in a different direction?

Drop a comment below — I’m genuinely curious what resonated most from these six sessions.

Closing Reflection:

JavaFest’25 revealed a fundamental shift: Java isn’t adopting AI as a feature—it’s architecting itself as an intelligence platform.

🔄 Know someone building AI systems in Java?

Share this with your team or your network. The conversation about Java’s AI future is just beginning, and these insights will matter for architecture decisions happening right now.

With Spring AI, MCP, RAG, Micronaut, GraalVM, and SLMs, the ecosystem has articulated a complete vision: intelligent systems that scale from cloud to edge, from enterprise to mobile, from centralized reasoning to distributed autonomy.

In the decade ahead, Java’s 30-year legacy of portability and type safety will merge with AI’s adaptive intelligence. The result? Trustworthy, scalable intelligent systems built on a foundation that enterprises already trust.

#Java #AI #SpringBoot #MCP #RAG #Micronaut #GraalVM #SLM #EdgeAI #SystemDesign #BeyondTheStack #JavaFest25

Author’s Note

If you’re an architect, engineer, or engineering leader exploring how AI will reshape your Java stack, you won’t want to miss what’s coming next.

I’m working on a comprehensive deep-dive: ‘Designing Agentic Architectures in Java: From MCP to RAG’ — where I’ll walk through real-world patterns, decision trees, and code examples for building production-grade AI systems.

❤️ Found this valuable?

If this article gave you a new perspective on Java’s AI direction, I’d appreciate it if you:

Liked this post to help it reach more engineers

Shared it with colleagues who care about Java architecture

Subscribed to get the deep-dive on Agentic Architectures (coming soon)

What to expect from Beyond the Stack:

Weekly insights on building intelligent, scalable systems

Deep-dives on emerging Java + AI technologies

Practical architecture patterns for enterprise teams

Follow along.

Series note:

This article is part of my Programming Languages series, where I explore how languages evolve, why myths persist, and what actually matters in real-world systems.

One question that kept running through my head during JavaFest'25:

If Java becomes the primary platform for AI systems, what does that mean for Python and ML engineers?

Will we see a convergence where backend teams own the AI layer, or is there still a clear separation?

Curious what architects reading this think:

Are you planning to build AI systems in Java, or sticking with Python-based stacks?