AI in Production: The Operational Failures No One Mentions in Model Benchmarks

Model accuracy is rarely the real problem. Prompt drift, data drift, latency spikes, and token costs are the operational failures quietly breaking production AI systems.

The biggest reason AI systems fail is not the models themselves.

It is due to the bad operations.

After 20+ years building distributed systems, one pattern keeps repeating: systems rarely fail because the core algorithm is wrong. They crumble under weak operational layers. AI systems are no different.

Welcome to Beyond the Stack Now, where each article explores engineering decisions and the why behind the tech stack, not just the tools themselves.

For March 2026, the focus is on Operational Efficiency and Scaling, and this week is focused on the AI & Agents. Subscribe to this newsletter so that you don’t miss any edition.

Since last month, I've structured each month around a single core theme, explored through the lenses of Code, AI, and System Design. Does this format work for you, or would you prefer something else?

Previous Read

Why Most AI Systems Fail Operationally?

Most conversations about AI failure start at the wrong layer.

When an AI system produces poor results, the first reaction is usually to blame the model. Engineers look at the architecture, the training data, the prompts, or the hyperparameters. The assumption is that the intelligence of the model is insufficient.

In practice, that is rarely the real reason.

Most AI systems fail not because the model is weak, but because the operational system around the model is fragile.

A fraud detection model passed all standard health checks (latency, throughput, errors), yet fraud doubled due to invisible model drift. Failures stem from fragile operations: monitoring gaps, unchecked costs, drift mismanagement, and shaky reliability. Production environments shift constantly, yet teams treat AI as static. This is operational excellence or the lack of it

The Illusion of AI Success

In controlled environments, AI systems often perform extremely well.

A notebook prototype produces accurate responses.

A demo created during a hackathon works smoothly.

A proof-of-concept integrated with a limited dataset performs reliably.

Then the system is deployed into production.

Latency suddenly increases. (now a top issue for 53% of AI projects )

Costs become unpredictable.

Outputs become inconsistent.

Users start reporting incorrect answers even though dashboards show no clear failures.

85% of organizations misestimate AI costs by over 10%, with 25% off by 50%+ as pilots scale.

The model itself has not changed.

The environment around the model has.

The gap between a successful demo and a reliable AI product is almost always operational maturity.

AI Systems Are Not Just Models

A production AI system is not a single endpoint calling a model.

It is an operational pipeline.

A typical AI request path often looks like this:

→ User request

→ Prompt construction

→ Context retrieval (RAG or data sources)

→ Model inference

→ Output validation

→ Safety checks

→ Cost accounting

→ Monitoring and logging

Every stage introduces operational risk.

Prompt construction may introduce conflicting instructions.

Context retrieval may return outdated information.

Inference latency may spike during load.

Safety filters may silently remove useful information.

Token usage may increase costs unexpectedly.

The model is only one component in a much larger chain.

Operational reliability determines whether the entire chain holds.

The Hidden Operational Challenges of AI

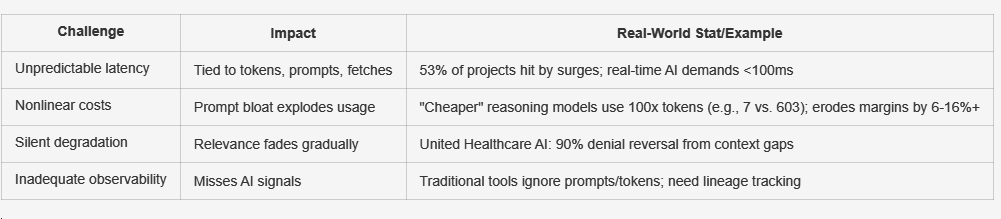

Traditional backend systems already have operational complexity. And AI systems amplify it.

Latency becomes harder to predict because inference time depends on token length, prompt size, and external data retrieval.

Costs scale differently. Each request consumes tokens, and poorly designed prompts can multiply token consumption without improving results.

Quality degradation often happens silently. Instead of obvious failures, responses gradually become less relevant or less accurate.

Traditional observability systems are also insufficient. CPU usage, error rates, and request latency are not enough to evaluate an AI system. You also need visibility into prompts, tokens, context sources, and response quality.

Without these signals, operational problems remain invisible.

If you are building AI-enabled products or operating AI pipelines in production, share your experience with the community.

Operational lessons from real systems are far more valuable than theoretical discussions.

Prompt Drift and Data Drift: The Quiet Degraders

Many AI failures appear gradually rather than suddenly. They emerge as the system’s inputs evolve.

Two common causes are prompt drift and data drift.

Prompt drift occurs when prompts change incrementally over time. Teams modify prompts to improve responses, add new instructions, or support new features.

Small adjustments accumulate.

Additional instructions are added.

More context is inserted.

Formatting rules change.

Guardrails become longer and more complex.

Over time, the prompt becomes larger and harder for the model to interpret consistently.

Token consumption increases.

Latency grows.

Conflicting instructions reduce output reliability.

Without versioning and evaluation, prompt changes can introduce regressions that remain unnoticed.

Prompt drift is essentially configuration drift for AI systems.

Data drift occurs when the external data environment changes.

Documentation evolves.

Product catalogs expand.

User behavior shifts.

New query patterns appear.

The model remains unchanged, but the input distribution shifts, which gradually reduce output accuracy.

Unlike traditional failures, drift rarely produces immediate alerts. The system continues running while the response quality slowly declines.

A Simple Example: Bengaluru Traffic

Consider traffic patterns in Bengaluru.

No AI system can permanently “solve” traffic prediction using a static dataset.

The environment changes constantly.

A new flyover opens.

A metro corridor expands.

Construction blocks a major road.

Schools reopen after the holidays.

Rain changes commuting behavior.

Events or festivals alter traffic patterns.

The traffic conditions that existed last month (or even last day) may not exist today.

If a model were trained on historical traffic patterns and deployed without continuous updates, its predictions would gradually become less reliable.

The model itself would not necessarily be incorrect.

The world it was trained on would simply no longer exist.

This is a practical example of data drift. Real-world environments change faster than most systems are designed to adapt.

Operational Excellence for AI Systems

Organizations that successfully deploy AI treat it as a systems engineering problem, not just a modeling problem.

Operational excellence typically requires four disciplines, backed by tools like Galileo (80% human eval match), Comet Opik (failure traces), and SageMaker Model Monitor (drift schedules):

Prompt and context governance ensures that prompts are versioned, evaluated, and monitored in the same way as production configurations.

Evaluation pipelines continuously test responses against benchmark prompts or datasets so regressions are detected early.

Cost observability tracks token usage, cost per request, and prompt inflation trends so AI workloads remain economically sustainable.

AI-specific observability measures signals such as hallucination probability, response relevance, context retrieval accuracy, and user correction behavior.

These capabilities transform AI from a fragile feature into a reliable system component.

Google's Vertex AI powers logistics AI, cutting diagnosis from 15 minutes to 10 seconds (98% cost drop). This turns fragile features into reliable components.

The Emerging Pattern

Across many production deployments, a consistent pattern is appearing.

Successful teams spend less time tuning models and more time engineering the operational layer around them.

The competitive advantage is not always the smartest model. (Even LangSmith faced a 55% API outage from cert drift on May 1, 2025)

It is the most reliable AI system.

The Shift AI Teams Must Make

Much of the AI industry narrative focuses on model capability.

Larger models.

More parameters.

Higher benchmark scores.

Production systems require something different.

They require operational discipline.

Modern AI engineering sits at the intersection of multiple disciplines:

Software engineering

Site reliability engineering

Data engineering

Model engineering

Operational excellence is the layer that connects them.

Final Thought

The next wave of AI innovation will not come only from more powerful models.

It will come from teams that understand how to operate AI systems reliably under real-world conditions.

In production environments, intelligence is valuable only when it is dependable.

Most AI systems do not fail because their models are weak.

They fail because the system around the model is operationally fragile.

If you found this perspective useful and want more deep dives into system design, reliability, and real-world engineering patterns:

Subscribe to Beyond the Stack for weekly essays on AI systems beyond the happy path.

Like if this made you uncomfortable (in a good way)

Restack to help others rethink “working” systems.

Share it with your team before the next AI System failure.

Comment with where you’ve seen AI fail quietly in production.

Related reading

Breakpoints of a Career: a short e-book on the invisible moments where careers bend, stall, or accelerate.

If this article resonated because you’ve seen systems (or careers) fail quietly, you may find it useful.

Read Next

Series Note

This article is part of my March series on Operational Excellence, where I examine how modern systems fail in production and how engineers can design for reliability from the start. This article is part of my February series on reliability as a design choice.

It also sits at the intersection of two ongoing tracks on Beyond the Stack now:

AI Systems — how models behave in production, why probabilistic systems change design assumptions, and where common myths break down.

Operational Excellence — how efficiency, cost, and predictability are designed (or ignored) long before dashboards start showing problems.

Explore the full AI series

https://beyondthestacknow.substack.com/t/ai

Explore the full Reliability and Resilience Engineering Series

https://beyondthestacknow.substack.com/t/operational-excellence

References

AI Cost Overruns Survey: 85% misestimate by >10%, 25% by 50%+. https://www.cio.com/article/4064319/ai-cost-overruns-are-adding-up-with-major-implications-for-cios.html

LangSmith Incident (55% API Failure): Cert drift caused 55% errors for 28 minutes. https://blog.langchain.dev/langsmith-incident-on-may-1-2025/

Vertex AI Logistics Case: 15min→10s diagnosis (98% cost drop). https://cloud.google.com/transform/101-real-world-generative-ai-use-cases-from-industry-leaders